Personal data should stay where the person can see it. The default
posture of modern software is the opposite: your files are the
fuel, the cloud is the engine, and you are the exhaust. BaseVault
inverts that. Your machine is the engine; the cloud, when used,
is an optional accelerator that has to prove what it's running
before it gets your data.

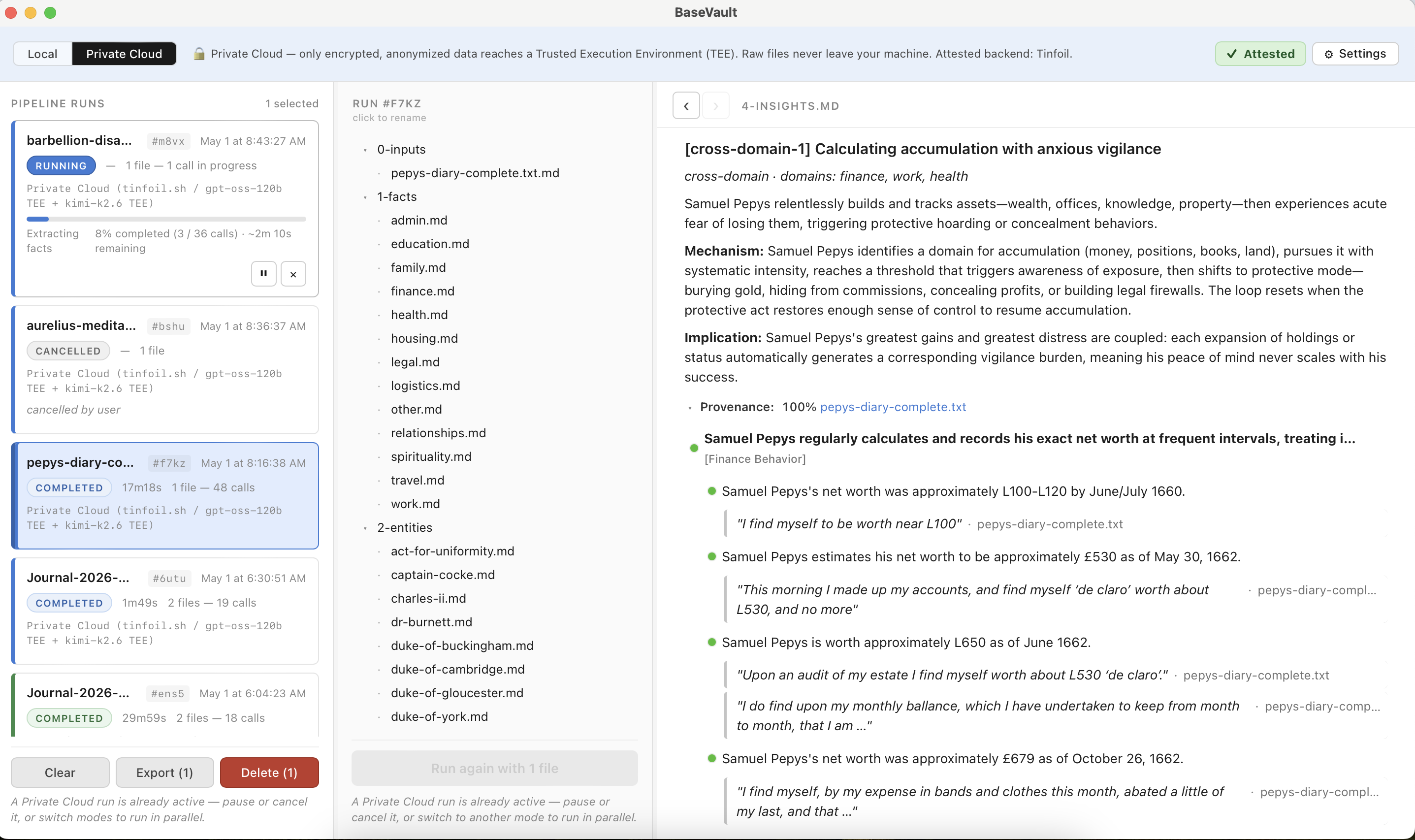

When computation has to leave the machine, the trust model should
replace reputation with proof. "Trust us, we're a good company"
answers the wrong question. The right demand is: show me
evidence I can verify without having to trust you.

Hardware attestation, transparency logs, and reproducible build
provenance give that evidence. BaseVault wires them end-to-end
and surfaces the chain in a panel you can audit.

Every claim a language model produces should trace back to its
source. Patterns trace to facts. Facts trace to the byte offset
where the underlying quote lives. Insights trace to the patterns
they aggregate. There is no orphan synthesis: if you can't follow
the chain back to evidence, you shouldn't believe the output.
BaseVault enforces this in the data model, not the prose.
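To make "enforced in the data model" concrete, here is a minimal sketch of one way such a chain could be typed. The class names (`Fact`, `Pattern`, `Insight`) and the helpers `verify_fact` and `trace` are illustrative assumptions, not BaseVault's actual schema. The idea is only that an insight or pattern without citations cannot be constructed, and that a fact can be re-checked against the bytes it points to.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    """Hypothetical record: a quote anchored to a byte range in a source file."""
    source_path: str
    start: int  # byte offset where the quote begins
    end: int    # byte offset one past the end of the quote
    quote: str


@dataclass(frozen=True)
class Pattern:
    """Hypothetical record: a summary that must cite at least one fact."""
    summary: str
    facts: tuple[Fact, ...]

    def __post_init__(self) -> None:
        if not self.facts:
            raise ValueError("a pattern must cite at least one fact")


@dataclass(frozen=True)
class Insight:
    """Hypothetical record: a summary that must cite at least one pattern."""
    summary: str
    patterns: tuple[Pattern, ...]

    def __post_init__(self) -> None:
        if not self.patterns:
            raise ValueError("an insight must cite at least one pattern")


def verify_fact(fact: Fact, source_bytes: bytes) -> bool:
    """Check that the quoted text really sits at the recorded byte range."""
    return source_bytes[fact.start:fact.end] == fact.quote.encode("utf-8")


def trace(insight: Insight) -> list[Fact]:
    """Walk an insight back to every fact it ultimately rests on."""
    return [fact for pattern in insight.patterns for fact in pattern.facts]
```

With this shape, an orphan synthesis fails at construction time rather than at review time: `Pattern("summary", ())` raises `ValueError`, so there is no way to store a pattern that cites nothing.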

No telemetry. No analytics. No surveillance pixel. The app sends
nothing about you, anywhere, unless you've explicitly told it to,
and even then, the destination has been cryptographically verified
to be running the code we expected.
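The gate described above can be sketched as a single check that runs before any bytes leave the machine. This is a simplified illustration under stated assumptions: `AttestationReport`, `UntrustedDestination`, and `send_if_verified` are hypothetical names, and the report is assumed to have already had its hardware signature validated elsewhere. Real attestation verification involves more steps than comparing one hash.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AttestationReport:
    """Hypothetical report whose hardware signature was already checked."""
    code_measurement: bytes  # hash of the code the remote side claims to run


class UntrustedDestination(Exception):
    """Raised when the remote measurement does not match the expected build."""


def send_if_verified(
    payload: bytes,
    report: AttestationReport,
    expected_measurement: bytes,
    user_opted_in: bool,
    send: Callable[[bytes], None],
) -> bool:
    """Send payload only if the user opted in AND the measurement matches."""
    if not user_opted_in:
        return False  # default posture: nothing leaves the machine
    # Constant-time comparison of the reported and expected code hashes.
    if not hmac.compare_digest(report.code_measurement, expected_measurement):
        raise UntrustedDestination("remote code measurement mismatch")
    send(payload)
    return True


# Usage: the expected value would come from a reproducible build (assumed here).
expected = hashlib.sha256(b"release-build").digest()
outbox: list[bytes] = []
send_if_verified(b"data", AttestationReport(expected), expected, True, outbox.append)
```

Both conditions are required: consent alone does not send anything to an unverified destination, and a verified destination receives nothing without consent.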

Open source on launch.

Every line of code that touches your data will be public, auditable,
and reproducible. The trust posture isn't a marketing claim; it's
a property you can verify by reading the source.